Physical containers vs. software containers

To understand containerization, let’s think about physical containers for a moment. The modern shipping industry can transport cargo efficiently thanks to containers.

Imagine how difficult it would be to transport an open pallet of smartphones together with pallets of food. Instead of having ships that specialize in transporting a certain kind of cargo, we just put everything in separate containers and send them all together on the same ship.

Containerization in the IT world works basically the same way. Instead of shipping a full operating system along with your software, you pack your code into a container that can run anywhere a container runtime is available. Since these containers are usually pretty small, you can pack a lot of them onto a single computer.

What is a container compared to a virtual machine?

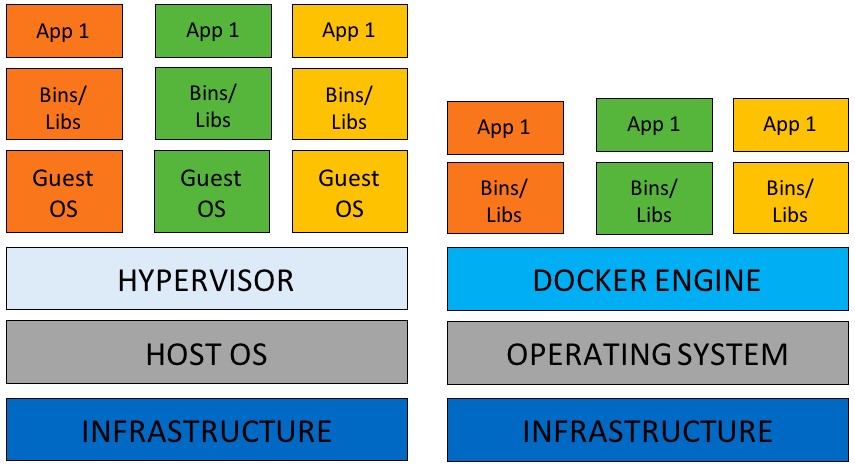

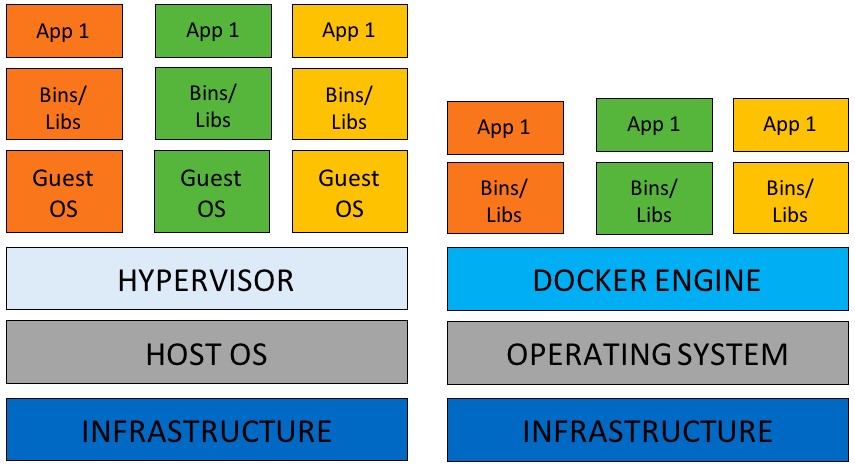

Sometimes, a container is confused with a virtual machine because they work in a similar way: both isolate applications without the need for dedicated physical hardware. However, the main difference lies in the architecture. Containers are isolated at the operating system level rather than at the hardware level, which makes them easier to manage. We can think of them as a lightweight form of virtual machine.

Like a virtual machine, a container has isolation, that is, a space reserved for data processing. It can have its own root user, mount file systems, and more. However, unlike virtual machines, each of which runs its own separate operating system, containers share the kernel of the host system with other containers, as shown in Figure 1.

Figure 1. Comparison between a VM and a Container architecture

What is a hypervisor?

A hypervisor is special software capable of emulating a guest computer and all its hardware resources. Hypervisors run on physical computers, also called host machines. There are two hypervisor types: hosted and bare metal. A hosted hypervisor runs on top of a host operating system and does not control the hardware drivers itself, while a bare metal hypervisor does not need an operating system to run.

How containerization works

The best-known piece in a container architecture is something called Docker. Docker is open source software, built on features of the Linux kernel, that is responsible for creating containers in an operating system, as we have seen in Figure 1. At Master Internet, we offer managed virtual servers with KVM virtualization that support Docker containerization.

By accessing a single OS kernel, Docker can manage multiple distributed applications, each of which runs in its own container. In other words, containerization is based on packaging software so that it can be delivered as a single virtual shipment.

Containers are created from Docker images. Although the images are read-only, Docker adds a read-write file system on top of the image's read-only file system to create a container.
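The layering idea can be illustrated with a small Python sketch. This is only a toy model, not how Docker stores files: the file paths and contents are hypothetical, and `collections.ChainMap` stands in for the stacked file systems, with lookups falling through to the image and writes landing only in the container's own layer.

```python
from collections import ChainMap

# Hypothetical image contents (path -> file content), treated as read-only.
image_layer = {
    "/app/main.py": "print('hello')",
    "/etc/app.conf": "debug=false",
}


def create_container(image):
    """Return a container view: a fresh writable layer stacked on the image."""
    writable_layer = {}
    # ChainMap reads from the first mapping that has the key,
    # but always writes to the first mapping only.
    return ChainMap(writable_layer, image)


container_a = create_container(image_layer)
container_b = create_container(image_layer)

# Changes made inside container A stay in A's writable layer.
container_a["/etc/app.conf"] = "debug=true"
container_a["/tmp/cache"] = "scratch data"
```

After these writes, `container_a` sees its modified configuration, while `container_b` and the shared image still see the original one. This is why many containers can be started from the same image without interfering with each other.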

When a container is created, Docker sets up a network interface that connects the container to the local host. It then assigns an IP address to the container and starts the specified process to run the application assigned to it.
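The start-up sequence described above can be sketched as a simplified model in Python. Nothing here talks to Docker: the `Bridge` class, the `start_container` function, the container names, and the commands are all hypothetical, and the `172.17.0.0/16` subnet is only an assumed example of a private network on the host.

```python
import ipaddress

# Assumption: an example private subnet for the host-side bridge network.
BRIDGE_SUBNET = ipaddress.ip_network("172.17.0.0/16")


class Bridge:
    """Toy model of the network that links containers to the local host."""

    def __init__(self, subnet):
        hosts = iter(subnet.hosts())
        self.gateway = next(hosts)  # first usable address stays on the host side
        self._free = hosts          # remaining addresses are handed to containers
        self.leases = {}

    def attach(self, container_name):
        """Give the container the next free IP address on the bridge."""
        address = next(self._free)
        self.leases[container_name] = address
        return address


def start_container(bridge, name, command):
    """Model the sequence: attach to the network, get an IP, record the process."""
    address = bridge.attach(name)
    return {"name": name, "ip": str(address), "command": command}


bridge = Bridge(BRIDGE_SUBNET)
web = start_container(bridge, "web", ["python", "app.py"])
db = start_container(bridge, "db", ["postgres"])
```

In this model each container ends up with its own address on the shared bridge, which is what lets the host and the containers reach each other.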

When implementing containerization, each container has all the parts needed to run a program: files, libraries, and the environment variables that make the environment executable.

As we mentioned earlier, unlike virtual machines, containers do not need their own operating system. This makes them faster and lighter, since they consume fewer resources from a server or the cloud.

Docker engine: the soul of containerization

The Docker Engine is the software layer on which Docker runs. In short, it is a lightweight execution engine that manages containers. It runs on Linux systems and consists of a Docker daemon running on the host computer, a Docker client that interacts with the daemon to execute commands, and a REST API for communicating with the daemon remotely.
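To make the client, daemon, and REST API relationship more concrete, here is a minimal sketch of a client that asks the daemon for its list of containers, using only the Python standard library. It rests on assumptions that may not hold on your machine: that the daemon listens on the Unix socket `/var/run/docker.sock`, that your user is allowed to read it, and that the `/containers/json` endpoint is available in your Engine API version. The helper names are hypothetical.

```python
import http.client
import json
import socket

# Assumption: default location of the daemon's Unix socket on a Linux host.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that travels over a Unix socket instead of TCP."""

    def __init__(self, socket_path, timeout=5):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def containers_path(include_stopped=False):
    """Build the REST path for listing containers."""
    return "/containers/json?all=true" if include_stopped else "/containers/json"


def list_containers(socket_path=DOCKER_SOCKET, include_stopped=False):
    """Play the client role: send one REST request to the daemon."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", containers_path(include_stopped))
        response = conn.getresponse()
        return json.loads(response.read())
    finally:
        conn.close()
```

On a host where the daemon is running, calling `list_containers()` should return a list with one entry per running container. The regular `docker` command line client plays the same role as this function: it turns commands into API requests for the daemon.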

Benefits of containerization

Containerization has made virtualization more efficient than it is with virtual machines by reducing both the resources needed and the execution time. Companies also save money because they don’t need several versions of operating systems with their respective licenses, as they do with VMs.

In addition, containers allow multiple applications to run on a single machine. Why? Because the kernel of the operating system is shared. This approach is much more attractive from a business point of view because it is easy to create applications, assemble them, and move them. Some other benefits of containerization are the following:

Portability

Containers can run on any desktop or laptop capable of hosting a container environment. Because applications do not need their own guest operating system, they run faster.

Virtually anyone can package an application on a laptop and test it immediately without modifications in a public or private cloud. Both the application environment and the operating environment remain clean and minimal.

Scalability and modulation

Containers are lightweight and add little overhead. Thanks to this, containers can be used to scale applications across groups of systems that increase or decrease services according to demand peaks. One of the best tools for scaling containers is Kubernetes, originally from Google. Kubernetes lets you automatically control the workload of the containers, their interaction, and their deployment.
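Scaling according to demand can be expressed as a simple proportional rule, sketched below in Python. The function name, the load units, and the replica limits are hypothetical. The rule is similar in spirit to the one Kubernetes documents for its Horizontal Pod Autoscaler, but this is a simplified illustration, not Kubernetes code.

```python
import math


def desired_replicas(current_replicas, current_load, target_load,
                     min_replicas=1, max_replicas=10):
    """Return how many container replicas to run for the observed load.

    current_load and target_load are per-replica averages in the same unit,
    for example CPU percent or requests per second.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    # Scale the replica count in proportion to how far the load is from target.
    wanted = math.ceil(current_replicas * current_load / target_load)
    # Keep the result inside the allowed range.
    return max(min_replicas, min(max_replicas, wanted))


# Demand peak: 4 replicas at 90% load with a 60% target.
scale_up = desired_replicas(4, current_load=90, target_load=60)

# Quiet period: 4 replicas at 30% load with the same target.
scale_down = desired_replicas(4, current_load=30, target_load=60)
```

With these example numbers the rule asks for 6 replicas during the peak and 2 during the quiet period. Because containers start quickly, adding or removing replicas like this is practical in a way that booting whole virtual machines often is not.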

Speed

What makes a container faster than a VM is that containers are isolated environments running on a single kernel, so they take fewer resources. Containers can start in seconds, while VMs need more time because each one has to boot its own operating system.

Docker Hub images

Docker Hub has thousands of public images that anyone can easily use. The image library allows you to find almost any image you need for your containers according to the specific needs of your applications.

Isolation and regulation

In containerization, applications are isolated not only from each other but also from the underlying system. This makes it easier to control an application within a container and the system resources it uses. It also ensures that both the data and the code remain isolated.

Did you know that...?

At Master Internet, we offer advanced cluster construction to clients that require extreme availability and performance for their projects. We have experience with clusters operated within one datacenter as well as clusters spread across geographically separate locations.