In the previous article of this series, we furnished a data center with network technologies. But now the tech and the fancy raised floor are just collecting dust. How can we breathe life into the data center, make it host our company apps and thus fulfill its purpose?

This third and final part of the How to build a data center series goes through the topics of virtualization, cloud and the mantra of high availability. So far we have invested a lot of money into building a data center, but one more large expense is still waiting for us: the disk array.

Server virtualization has many benefits

To understand the benefits of cloud better, it’s often good to start from an entirely different perspective: what problems could we face while using regular physical servers? One of the most common is the failure of one or more hardware components in a server. Such a problem often needs an on-site technician to fix it. Let’s assume the technician does not live in the data center, but in an entirely different part of the city. It will take the technician some time just to reach the data center, and throughout all this the company apps are down. The repair can take even longer if the backups have to be restored onto different hardware, because the new hardware needs its own drivers installed.

All this is solved by server virtualization, in other words by separating the operating system from the physical hardware. An operating system in a virtual machine doesn’t actually “see” the hardware itself, just a virtual network card, a virtual drive controller and so on. The virtual hardware is largely independent of the physical hardware, so even when we need to restore a backup on a different machine, we still get an identical environment.

Apart from driver troubles, virtualization elegantly solves the problem of upgrading hardware and lengthens the life of our OS. In the past, a hardware upgrade often forced a move to a new version of the software as well. With virtualization, you can replace hardware as often as needed without crippling your carefully tuned and well-running system.

Yet another choice: Picking the right hypervisor

Even if you’ve already decided to use virtualization and reap all its benefits, there is another choice ahead of you: the hypervisor. There are two types of full virtualization, which differ in where the hypervisor sits. The first is the bare-metal hypervisor, which is installed directly on the hardware in place of a general-purpose operating system. A well-known hypervisor of this type is VMware ESXi. Its main advantages are its small size and simplicity: the server focuses on one task and does it well. Updates don’t come as frequently as with regular operating systems, so servers can run longer without a restart. The main disadvantage is limited hardware support, so you would do well to check server compatibility before you buy.

The second type of hypervisor runs inside a regular operating system, so it’s called a hosted hypervisor. Good examples are Oracle VirtualBox and VMware Workstation. The advantage of this kind of virtualization is that it can use any hardware that runs the preferred operating system.

Hosted hypervisors are gradually becoming more and more similar to their bare-metal counterparts. Microsoft started to offer Hyper-V Server, an edition of its Windows Server OS modified purely for virtualization. KVM has been part of the mainline Linux kernel since 2007. These specialized systems try to cherry-pick the best of both approaches, so the difference between bare-metal and hosted hypervisors is slowly blurring.

Benefits of the cloud are substantial

Are you pondering the difference between virtualization and cloud computing? Think of virtualization as a means to an end. It separates the end user from the physical hardware and offers him computational resources. Cloud is a product that allows him to consume these resources easily as a service.

The end-user of a cloud doesn’t rent a specific server, but resources in general. On an infrastructure level these are usually CPU performance, RAM, storage space and network access.

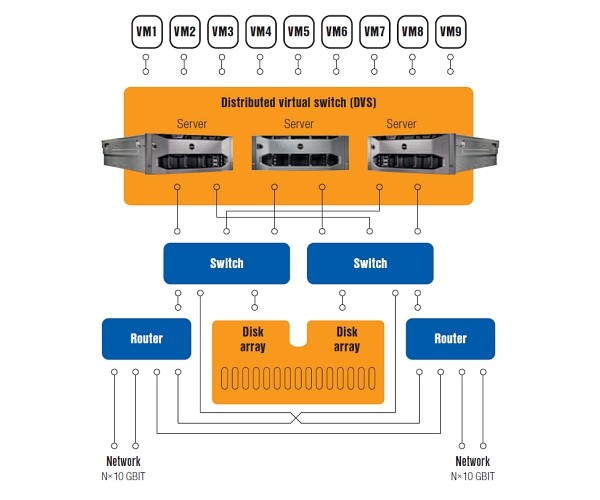

A cloud can keep running through hardware failures thanks to its robust failover technology. It introduces redundancy at every level of the infrastructure, from servers to disk array controllers.
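To see why redundancy at every level matters, here is a minimal sketch of the usual availability arithmetic. It assumes that components fail independently of each other, which real hardware only approximates, and the 99% figure and the three layers are made up for illustration.

```python
def parallel_availability(component_availability: float, copies: int) -> float:
    """Availability of a group of redundant copies where any one copy is enough.

    Assumes independent failures (an idealization).
    """
    if not 0.0 <= component_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if copies < 1:
        raise ValueError("need at least one copy")
    # The group is down only when every copy is down at the same time.
    return 1.0 - (1.0 - component_availability) ** copies


def serial_availability(*layer_availabilities: float) -> float:
    """Availability of a chain of layers that must all work at once."""
    result = 1.0
    for availability in layer_availabilities:
        result *= availability
    return result


# Hypothetical figure: each server, switch and controller is up 99% of the time.
single = serial_availability(0.99, 0.99, 0.99)
redundant = serial_availability(
    parallel_availability(0.99, 2),  # two servers
    parallel_availability(0.99, 2),  # two switches
    parallel_availability(0.99, 2),  # two disk array controllers
)

print(f"no redundancy:    {single:.4%}")
print(f"every layer twice: {redundant:.4%}")
```

A chain is only as available as the product of its layers, so a single non-redundant layer drags the whole figure down no matter how well the others are duplicated.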

Cloud computing can be very useful in a company where different organizational units share the same infrastructure. Virtualization then isolates them from each other, and suitable software can report how much of the infrastructure each division uses. A central IT division can oversee the process and make sure that no part of the infrastructure is overburdened and that the resources are going where they are needed. This approach to cloud inside a company is called private cloud.

As the name suggests, there are also public clouds. These work in a similar fashion, only instead of different divisions of the same company there are different customers, and the central IT division is replaced by the cloud service provider. The provider measures the usage of the infrastructure, which then forms the basis for calculating the monthly rental fees for the cloud.
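As a rough illustration of how metered usage turns into a monthly fee, consider the sketch below. The four metered resources follow the list given earlier (CPU, RAM, storage, network), but the `Usage` type, the rate names and all prices are hypothetical and do not come from any real provider. The same calculation could serve a private cloud for reporting usage per division.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Usage:
    """Metered consumption of one customer (or division) for one month."""
    vcpu_hours: float
    ram_gb_hours: float
    storage_gb_months: float
    traffic_gb: float


# Hypothetical price list, in an arbitrary currency.
RATES = {
    "vcpu_hour": 0.02,
    "ram_gb_hour": 0.005,
    "storage_gb_month": 0.10,
    "traffic_gb": 0.05,
}


def monthly_fee(usage: Usage, rates: dict = RATES) -> float:
    """Sum of each metered quantity times its unit price, rounded to cents."""
    total = (
        usage.vcpu_hours * rates["vcpu_hour"]
        + usage.ram_gb_hours * rates["ram_gb_hour"]
        + usage.storage_gb_months * rates["storage_gb_month"]
        + usage.traffic_gb * rates["traffic_gb"]
    )
    return round(total, 2)


# One VM with 2 vCPUs and 4 GB RAM running for 720 hours,
# plus 100 GB of storage and 50 GB of outgoing traffic.
example = Usage(
    vcpu_hours=2 * 720,
    ram_gb_hours=4 * 720,
    storage_gb_months=100,
    traffic_gb=50,
)
print(monthly_fee(example))
```

Real price lists tend to be more involved (tiers, reserved capacity, minimum fees), so treat this only as the basic shape of the calculation.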

Migrating virtual servers between private and public cloud is usually quite straightforward, even across platforms. There is an open standard available, OVF, along with its single-file OVA packaging, and conversion tools that help with the move as well.

Running apps in the cloud

When building a data center from scratch, you have a completely free choice of both platform and operating system. If a company already has some IT infrastructure, it should carefully plan ahead.

Modern operating systems, i.e. those still supported by their vendors, usually offer support for virtual hardware. This makes it possible to move a physical server into a virtual environment in its current form, or after installing just a few drivers. This can be done with special converters, by simple copying or through backup restores.

A much worse situation occurs with so-called legacy systems. These are no longer supported, don’t offer recent drivers and IT departments generally avoid touching them at all. Almost every business has at least one of these skeletons in their closet and a migration into the cloud is a good opportunity to kick them out.

If this is not at all possible, at least make sure to give the new machine the most compatible virtual hardware there is. For example, VMware’s virtual network card that emulates the Intel E1000 adapter has become a legend of sorts for its ability to work with almost anything.

When not to migrate at all?

There are also cases where it’s either not financially viable or not generally possible to move the company infrastructure into the cloud. First of all, it’s not advisable to migrate applications that need more resources than the most powerful server in the cloud can provide. This can easily occur with databases. For an application that would occupy a whole server by itself, the benefits of virtualization are overshadowed by the overhead of running the hypervisor unnecessarily.
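A quick way to spot such applications before the migration is to compare their requirements with the largest host in the cluster. The sketch below is only an illustration: the `Resources` type, the host sizes and the 10% headroom reserved for the hypervisor are all assumptions, not figures from any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    cpu_cores: int
    ram_gb: int


def fits_on_single_host(app: Resources, hosts: list, headroom: float = 0.10) -> bool:
    """True if at least one host can hold the app on its own.

    `headroom` is the share of each host kept free for the hypervisor
    (an assumed value, tune it for your platform).
    """
    usable = 1.0 - headroom
    return any(
        app.cpu_cores <= host.cpu_cores * usable
        and app.ram_gb <= host.ram_gb * usable
        for host in hosts
    )


# Hypothetical cluster and applications.
cluster = [Resources(cpu_cores=32, ram_gb=256), Resources(cpu_cores=64, ram_gb=512)]
web_server = Resources(cpu_cores=8, ram_gb=32)
big_database = Resources(cpu_cores=96, ram_gb=768)

print(fits_on_single_host(web_server, cluster))    # True
print(fits_on_single_host(big_database, cluster))  # False
```

An application that only just fits deserves a second look as well, since it would leave the host with no room for anything else.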

Complications could also arise when dealing with apps that need extremely low network latency, as do servers running online games or stock exchange software.

And lastly, it is best not to migrate systems that work with specific physical hardware, for example a USB key or a chip card reader. Even though these can be run in the cloud, the hardware needs to be mapped to a specific virtual machine, which in turn ties the machine to one physical server and defeats the whole point.
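The effect of such a hardware mapping on failover can be shown with a toy model. The host names and the device label below are hypothetical, and a real scheduler considers far more than attached devices. The point is only that a virtual machine tied to a physical device has nowhere to go when its host fails.

```python
def failover_targets(vm_devices: set, host_devices: dict, failed_host: str) -> list:
    """Hosts that could take over a VM after `failed_host` goes down.

    A host qualifies only if it has every physical device the VM is mapped to.
    """
    return sorted(
        host
        for host, devices in host_devices.items()
        if host != failed_host and vm_devices <= devices
    )


# Hypothetical cluster: only host-a has the USB license key plugged in.
cluster_devices = {
    "host-a": {"usb-license-key"},
    "host-b": set(),
    "host-c": set(),
}

# A VM without mapped hardware can restart on either remaining host.
print(failover_targets(set(), cluster_devices, "host-a"))                # ['host-b', 'host-c']
# A VM mapped to the USB key has no alternative host left.
print(failover_targets({"usb-license-key"}, cluster_devices, "host-a"))  # []
```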

The hard part is over, now you just need experts to run it

Together, we did it. A theoretical empty plot of land has evolved into a working data center. We have chosen the right hardware and built a cloud, then migrated the company’s apps into it. This increased the quality, availability and life-expectancy of our IT infrastructure. Any problems can be solved faster and more effectively than before.

But we still need experts to take care of technology old and new alike. If you’re not ready to do such a large project on your own, or just lack the right people, don’t be afraid to ask for help from more experienced colleagues or providers. The good ones offer a first consultation free and with no strings attached.

The article was previously published in the professional journal IT Systems, issue 12/2014